Building the Future of Cloud-Native Software on a Multi-Architecture Infrastructure

Over the past few years, cloud-native developers have been embracing multi-architecture infrastructure that can run workloads on either Arm or x86 processors. This lets each workload run on the hardware best suited to it, without developers having to worry about the underlying instruction set.

This shift to multi-architecture infrastructure is driven by several factors, including the need for greater efficiency, cost savings and sustainability in cloud computing. In practice, it has meant adding multi-architecture best practices to software development, typically by adding Arm architecture support to container images and deploying those containers on hybrid Kubernetes clusters with both x86 and Arm-based nodes.

Multi-Architecture Growth for Cloud-Native Projects

Among CNCF Graduated projects, over 80% provide native support for the Arm architecture, and a growing number of Incubating and Sandbox projects are adopting multi-architecture software development practices.

Embrace Next-Generation Infrastructure to Run Your Code

Developers gain several benefits from adopting multi-architecture build practices, including:

Achieving better price/performance ratios for targeted cloud deployments. Controlling the cost of cloud computing remains a challenge. Prices can change quickly and unexpectedly, suddenly making it more expensive to run the same workloads. Changes to a workload can trigger unanticipated charges, and even small inefficiencies add to infrastructure costs over time. When working to minimize the infrastructure budget, optimizing the price/performance ratio of the underlying hardware becomes an important source of savings. The Arm architecture, recognized for its low-power operation and efficient processing, can help reduce costs by making compute more affordable. In side-by-side comparisons, Arm Neoverse platforms have consistently shown better efficiency than their x86 counterparts. As a result, developers have found that running some or all of their workloads on Arm is an effective way to increase efficiency and, in turn, improve price/performance.

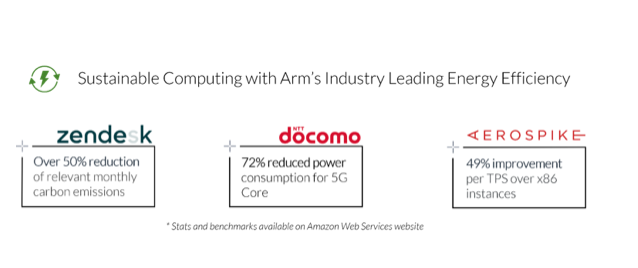

Contributing to environmental sustainability by using energy-efficient Arm-based cloud instances, which are available from all major cloud providers. Sustainability is increasingly important to organizations globally, and software has a key role to play in progressing toward carbon-neutrality goals. Within the CNCF, the Environmental Sustainability Working Group is increasingly focused on defining and implementing best practices that help the open source community reduce its environmental footprint. Making software multi-architecture lets us take advantage of these efficiencies across the hardware and software stack.

Achieving an optimal footprint for software deployments at the edge and in IoT. The requirements for running cloud-native applications in the data center and the cloud differ from those for running them at edge locations. With the exponential growth in IoT-driven data and the need to process data much closer to its source, developers need to consider factors such as latency, network bandwidth and hardware resource footprint, including CPU, RAM and power consumption. Arm-based edge platforms are very diverse and cover deployments across industries such as retail, manufacturing and industrial IoT, transportation, utilities and beyond. For example, in this blog we showcased a fully functioning AngularJS-based app deployed on a Kubernetes cluster hosted on Raspberry Pi, with the Linkerd service mesh and K3s as the orchestrator. In this use case, on an 8GB Raspberry Pi, Linkerd consumed ~415MB of memory and K3s ~650MB, and together they used only around 20-25% of total CPU, leaving plenty of resources for the application.
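As a quick check of the Raspberry Pi figures above, the combined memory footprint of Linkerd and K3s can be expressed as a share of the board's memory. This assumes a nominal 8GB = 8192MB; the memory the OS actually reports as usable is somewhat lower, so the real share is slightly higher.

```latex
\frac{415\,\text{MB} + 650\,\text{MB}}{8192\,\text{MB}} = \frac{1065}{8192} \approx 13\%,
\qquad 8192\,\text{MB} - 1065\,\text{MB} = 7127\,\text{MB} \approx 7\,\text{GB}
```

In other words, roughly seven-eighths of the board's memory remains available to the application.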

Getting Started

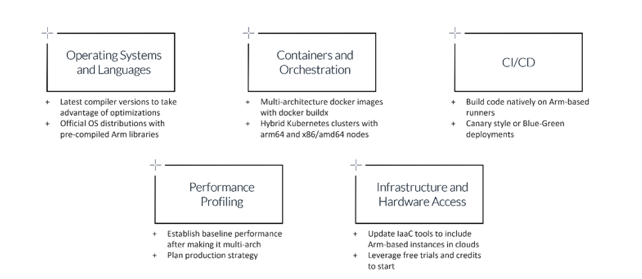

Below are some common best practices we have assembled from our work helping cloud-native projects become multi-architecture:

Operating Systems and Languages

All major OS distributions and languages support Arm Neoverse platforms. We recommend using the latest official distributions and the latest versions of languages and runtimes. Here are some language-specific best practices to consider:

- C/C++: When building C/C++ code with compilers such as GCC (GNU Compiler Collection) or Clang/LLVM, using the latest compiler version provides better support and performance optimizations for Arm Neoverse-based platforms. All major Linux distributions provide packages for newer versions of these toolchains, for example, gcc-13 (Debian) or gcc-toolset-13 (Red Hat Enterprise Linux). To further improve performance, consider the -mcpu=native flag, which builds a binary tuned for the processor you are currently running on and makes use of the latest features of its Arm Neoverse core. Because such a binary may use instructions that other Arm processors lack, use this flag only when you build on the same type of hardware you deploy to.

- Java: Java programs compile to bytecode that can run on any JVM, regardless of the underlying architecture, without recompilation. All the major Java distributions are available on Arm Neoverse-based platforms, for example OpenJDK, Amazon Corretto and Oracle Java. To ensure your application runs correctly and performs well on Arm, check whether any of its JAR libraries bundle architecture-specific shared objects that are called through JNI (Java Native Interface). Unzip each such JAR file and check whether an arm64 build of every native library is present.

- .NET: .NET 5 and later support Linux on Arm64/aarch64-based platforms, and each .NET release brings further optimizations for Arm Neoverse-based platforms. Refer to this .NET-specific enablement document for details.

- Go: As with other languages, using the latest Go compiler (v1.21 at the time of writing) improves the performance of many applications.

Containers and Orchestration

When building multi-architecture Docker images, use the official Linux base images as a starting point. Docker provides a CLI plugin called buildx, which is included in Docker Desktop for Windows and macOS. Use docker buildx to create multi-architecture images that run on both x86/amd64 and arm64 platforms. Major container registries such as Docker Hub, Amazon ECR, Google Artifact Registry, Azure Container Registry and Quay support multi-architecture images. For deployment, most container orchestration platforms support both x86/amd64 and arm64 hosts. You can use any of the managed Kubernetes services (Amazon EKS, Azure AKS, Google GKE, Oracle OKE) or build your own Kubernetes cluster.
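After pushing an image built with docker buildx build --platform linux/amd64,linux/arm64, it is worth confirming that the published image index really contains both platforms. The Go sketch below reads the raw index JSON (for example, the output of docker buildx imagetools inspect --raw IMAGE) from standard input and reports any required platform that is missing. It models only the manifests[].platform fields shared by OCI image indexes and Docker manifest lists; the program name checkplatforms and the function missingPlatforms are hypothetical.

```go
// Command checkplatforms (hypothetical) reads an image index (OCI image index
// or Docker manifest list, as JSON) from stdin and checks that it covers the
// required platforms.
package main

import (
	"encoding/json"
	"fmt"
	"io"
	"os"
)

// imageIndex models only the fields of an image index that this check needs.
type imageIndex struct {
	Manifests []struct {
		Platform *struct {
			OS           string `json:"os"`
			Architecture string `json:"architecture"`
		} `json:"platform"`
	} `json:"manifests"`
}

// missingPlatforms returns each entry of want (such as "linux/arm64") that
// has no matching manifest in the raw index JSON.
func missingPlatforms(raw []byte, want []string) ([]string, error) {
	var idx imageIndex
	if err := json.Unmarshal(raw, &idx); err != nil {
		return nil, err
	}
	have := make(map[string]bool)
	for _, m := range idx.Manifests {
		if m.Platform != nil {
			have[m.Platform.OS+"/"+m.Platform.Architecture] = true
		}
	}
	var missing []string
	for _, p := range want {
		if !have[p] {
			missing = append(missing, p)
		}
	}
	return missing, nil
}

func main() {
	raw, err := io.ReadAll(os.Stdin)
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	want := []string{"linux/amd64", "linux/arm64"}
	missing, err := missingPlatforms(raw, want)
	if err != nil {
		fmt.Fprintln(os.Stderr, "not a valid image index:", err)
		os.Exit(1)
	}
	if len(missing) > 0 {
		fmt.Fprintln(os.Stderr, "missing platforms:", missing)
		os.Exit(1)
	}
	fmt.Println("image index covers", want)
}
```

Platform variants such as arm64/v8 are ignored, and entries without a platform, or with the unknown/unknown platform that buildx uses for attestation manifests, simply do not match any required platform. A single-platform image (a plain manifest rather than an index) has no manifests list, so every required platform is reported as missing.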

CI/CD

CI/CD pipelines are crucial for automating the build, test and deployment of multi-architecture applications. You can build your application code natively on Arm in a CI/CD pipeline; for example, both GitLab and GitHub offer runners that build natively on Arm-based platforms. If you already have an x86-based pipeline, start by creating a separate pipeline with arm64 build and deployment targets, then run your unit tests and identify any that fail on Arm. Use blue-green deployments to stage your application and test its functionality. Alternatively, use canary deployments to route a small percentage of user traffic to Arm-based deployment targets.
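Canary traffic splitting is usually configured in a load balancer, ingress controller or service mesh rather than in application code, but the underlying idea is simple. The Go sketch below assumes a hypothetical setup in which each request carries a user ID: it hashes the ID with FNV-1a and sends users whose bucket (0-99) falls below the canary percentage to the arm64 deployment. The function routeToArm is illustrative only.

```go
// A hypothetical canary router: a fixed percentage of users is consistently
// sent to the arm64 deployment; everyone else stays on the x86 deployment.
package main

import (
	"fmt"
	"hash/fnv"
)

// routeToArm reports whether userID falls into the canary bucket.
// Hashing (instead of a random draw per request) keeps each user's
// assignment stable across requests.
func routeToArm(userID string, canaryPercent uint32) bool {
	h := fnv.New32a()
	h.Write([]byte(userID)) // hash.Hash's Write never returns an error
	return h.Sum32()%100 < canaryPercent
}

func main() {
	const percent = 5
	const users = 10000
	arm := 0
	for i := 0; i < users; i++ {
		if routeToArm(fmt.Sprintf("user-%d", i), percent) {
			arm++
		}
	}
	fmt.Printf("%d of %d users routed to arm64 (target %d%%)\n", arm, users, percent)
}
```

Because the bucket depends only on the user ID, raising the percentage adds new users to the canary without moving existing ones back to x86, and problems reported by canary users are easier to reproduce on the same architecture.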

Performance Profiling

Once your application stack is built and fully functional on Arm, establish a performance baseline. Open source performance analysis tools such as perf, along with eBPF-based tooling, work well on Arm. Monitor the application closely to ensure it behaves as expected, and investigate any performance drops component by component. Once the application is running as expected, plan a transition strategy for production.
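Comparing a new run against the recorded baseline is easy to automate. The sketch below assumes you have already collected per-metric measurements (for example, p99 latencies from load tests or profiling sessions) for the x86 baseline and for the Arm candidate; the metric names, values and the 5% tolerance are hypothetical placeholders, and the function regressions is illustrative only. It flags every lower-is-better metric that grew by more than the tolerance.

```go
// Compare a candidate run (for example, on arm64) against a recorded baseline
// and flag metrics that got worse by more than a tolerance.
package main

import (
	"fmt"
	"runtime"
	"sort"
)

// regressions returns, sorted by name, the metrics whose current value
// exceeds the baseline by more than tolerance (0.05 = 5%). Every metric is
// assumed to be lower-is-better; metrics missing from current are skipped.
func regressions(baseline, current map[string]float64, tolerance float64) []string {
	var out []string
	for name, base := range baseline {
		cur, ok := current[name]
		if !ok || base <= 0 {
			continue
		}
		if (cur-base)/base > tolerance {
			out = append(out, name)
		}
	}
	sort.Strings(out)
	return out
}

func main() {
	// Hypothetical p99 latencies in milliseconds; replace with your own data.
	baseline := map[string]float64{"api_p99_ms": 42, "db_query_p99_ms": 8.0}
	current := map[string]float64{"api_p99_ms": 40, "db_query_p99_ms": 9.5}

	fmt.Printf("candidate measured on %s/%s\n", runtime.GOOS, runtime.GOARCH)
	for _, name := range regressions(baseline, current, 0.05) {
		fmt.Printf("regression: %s (%.1f -> %.1f)\n", name, baseline[name], current[name])
	}
}
```

With the placeholder values, only db_query_p99_ms is flagged, since it grew by about 19% while api_p99_ms improved. Flagged metrics are the natural starting point for drilling into individual components with perf or eBPF-based tools.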

Infrastructure/Hardware Access

For production deployments, update your existing infrastructure-as-code (IaC) templates to add Arm-based instances. For software developers getting started on their multi-architecture enablement journey, we provide free access to Arm-based hardware through our Works on Arm initiative. This includes free cloud instances from our cloud service provider partners, as well as bare-metal servers provided by Arm, so that cloud-native projects can get started.

Resources

For developers who want to learn more about technical best practices for developing on Arm, we provide multiple resources, including our learning paths, which offer how-to guides on a variety of topics. To see how other developers are approaching multi-architecture development, check out the Arm Developer Hub, which offers on-demand webinars, events, Discord channels, training, documentation and more.

And if you plan to be at KubeCon/CloudNativeCon North America in Chicago on November 6-9, 2023, stop by Arm booth #L4 to learn more, or drop us an email.