FeedStock Employs Connectors Based on Containers to Aggregate Data

A platform from FeedStock that applies machine and deep learning algorithms to generate insights from unstructured data is employing containers to simplify the collection of data. Recently certified by Red Hat, the FeedStock Connector is now available via the Red Hat Container Registry as part of the Red Hat Ecosystem Catalog.

FeedStock CTO Marco Paolini says connectors based on containers will play a critical role in reducing the total cost of data management by making it possible to standardize the way data is shared across loosely coupled systems.

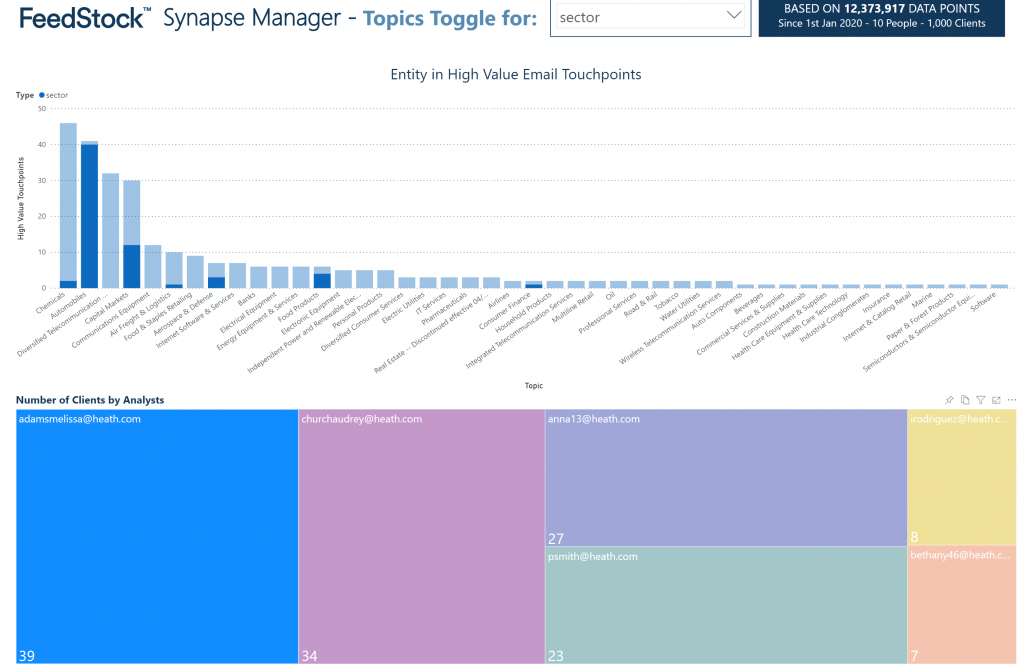

At a time when many organizations are trying to derive value from data, capturing that data in a format that is easy to consume turns out to be a significant challenge. In the case of FeedStock, connectors make it easier to pull data into a cloud-based analytics application running on Amazon Web Services (AWS). The company uses the connector to pull data from email systems and other sources of unstructured data. Algorithms then analyze that data to surface next-best recommendations for sales professionals in real time. Because that approach allows FeedStock to correlate data on customer interactions that could span hundreds of systems, it can also surface potential compliance and security issues in real time, notes Paolini.

Much of that data today is essentially dark data that organizations would otherwise not be able to operationalize in any meaningful way, he adds.

It’s not clear just yet to what degree container technology may transform connectors specifically and middleware in general. It is apparent, however, that containers present a standard set of interfaces that make it simpler for backend applications to consume a wide variety of data generated by any number of applications.

Most digital business transformation initiatives presuppose that organizations will be able to organize relevant customer data in a way that surfaces actionable insights. In practice, most of that data is stored in isolated applications, so correlating and analyzing it typically involves a massive normalization effort. Containers are emerging as a critical tool in that effort.
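To make the normalization problem concrete, here is a minimal Python sketch of what a connector might do with records from two isolated systems. It is not FeedStock's implementation: the `Interaction` schema, the field names and the two input shapes are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Interaction:
    """Hypothetical common schema for a customer interaction."""
    source: str
    customer: str
    occurred_at: datetime
    text: str


def from_email(msg: dict) -> Interaction:
    # Assumed email shape: {"from": ..., "date": ISO 8601 string with offset, "body": ...}
    return Interaction(
        source="email",
        customer=msg["from"].strip().lower(),
        occurred_at=datetime.fromisoformat(msg["date"]).astimezone(timezone.utc),
        text=msg["body"].strip(),
    )


def from_crm_note(note: dict) -> Interaction:
    # Assumed CRM shape: {"contact_email": ..., "ts": Unix seconds, "note": ...}
    return Interaction(
        source="crm",
        customer=note["contact_email"].strip().lower(),
        occurred_at=datetime.fromtimestamp(note["ts"], tz=timezone.utc),
        text=note["note"].strip(),
    )


# Two records about the same customer, as each system might store them.
email = {"from": " Ana@Example.com ", "date": "2020-03-01T09:30:00+01:00", "body": "Pricing?\n"}
note = {"contact_email": "ana@example.com", "ts": 1583055000, "note": "Follow up call"}

# Once normalized, records from both systems can be ordered and correlated together.
records = sorted([from_crm_note(note), from_email(email)], key=lambda r: r.occurred_at)
```

Each source gets its own small adapter, while everything downstream sees only the shared schema. Packaging each adapter in its own container is one way that kind of boundary could be kept uniform across many systems.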

Of course, it might be a while before connectors based on containers are pervasive across an enterprise. However, IT teams should assume that existing connectors, much like the rest of the application environment, will be modernized using containers. In fact, once connectors are based on containers, it’s likely a lot more of them will be employed across an enterprise.

Connectors based on containers should also prove easier to update, as containers can be ripped and replaced to add functionality over time.

The movement of data is often considered the root of all IT evil. Data in transit is often susceptible to cybersecurity attacks, so it needs to be secured as it is moved. However, the movement of data is also unavoidable. As such, it should be done using standard interfaces as expeditiously as possible to minimize any potential friction.