VMware Extends Tanzu to Simplify Application Development

VMware has added a large language model (LLM), available in beta, that it has trained to bring generative artificial intelligence (AI) capabilities to the Tanzu platform. It has also extended agent software based on the company’s machine learning algorithms, making it simpler to set up the VMware Tanzu GemFire Vector DB Extension, which can be used to extend the capabilities of an LLM.

In addition, VMware has added support for a Spring runtime for Java applications to Tanzu along with Deployment Frequency and Lead Time for Changes metrics based on the DevOps Research and Assessment (DORA) framework defined by Google.
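For readers unfamiliar with the two DORA metrics named above, the following is a minimal Python sketch of how they are commonly computed. It does not show how Tanzu calculates them, which the announcement does not describe. The sample records, the function names and the choice of the median for lead time are all assumptions made for illustration.

```python
from datetime import datetime, timedelta
from statistics import median

# Hypothetical records: (commit time, production deploy time) per change.
DEPLOYMENTS = [
    (datetime(2024, 1, 1, 9, 0), datetime(2024, 1, 1, 15, 0)),
    (datetime(2024, 1, 2, 10, 0), datetime(2024, 1, 3, 10, 0)),
    (datetime(2024, 1, 4, 8, 0), datetime(2024, 1, 4, 20, 0)),
    (datetime(2024, 1, 5, 12, 0), datetime(2024, 1, 7, 12, 0)),
]


def deployment_frequency(deployments, window_days):
    """Average number of production deployments per day over the window."""
    return len(deployments) / window_days


def lead_time_for_changes(deployments):
    """Median time from commit to production deploy (assumed aggregation)."""
    seconds = [
        (deployed - committed).total_seconds()
        for committed, deployed in deployments
    ]
    return timedelta(seconds=median(seconds))


if __name__ == "__main__":
    print("Deployments per day:", deployment_frequency(DEPLOYMENTS, 7))
    print("Median lead time:", lead_time_for_changes(DEPLOYMENTS))
```

The median is used here because a single slow release would skew a mean; teams that prefer a mean or a percentile can swap the aggregation without changing the rest of the sketch.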

The Tanzu Hub, meanwhile, now has a revamped user interface along with integrations with the Tanzu Insights observability platform and the Tanzu CloudHealth management tools. VMware has also enhanced Intelligent Assist in Tanzu to make it simpler to search external sources to help diagnose and resolve issues.

VMware also has a GreenOps tool, in beta, for tracking energy consumption, along with a VMware Tanzu Guardrails tool to simplify governance and compliance. There is also now a VMware Tanzu Application Catalog for tracking open source artifacts and viewing an inventory of software bills of materials (SBOMs).

The company is also making available a technology preview of VMware Tanzu Data Hub to streamline the management of VMware Tanzu and data services across multiple clouds and Kubernetes environments.

Finally, VMware has added support for Postgres databases to the database-as-a-service (DBaaS) platform it provides for Tanzu, along with support for the Oracle Cloud VMware Solution to improve availability of Kubernetes clusters.

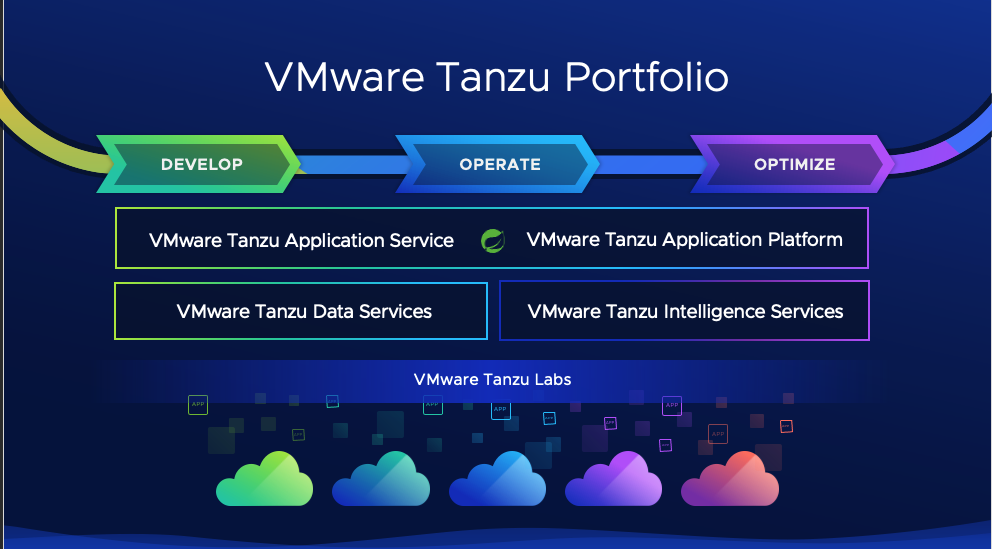

Betty Junod, vice president of marketing for the Modern Apps and Management Business Group at VMware, said VMware is pursuing a three-tier approach based on managing core Kubernetes infrastructure, the data services required by applications and additional intelligence services for optimizing the overall environment. Kubernetes today is essentially plumbing that requires a range of capabilities to make it feasible to build and deploy cloud-native applications in an enterprise computing environment, she added.

The overall goal is to provide the developers who build these applications with a better experience, said Junod.

In general, the rate at which applications are being deployed in Kubernetes environments is increasing, thanks in part to the rise of AI applications. Most of the applications being infused with AI models are being constructed using containers, which makes them easier to build, deploy and maintain.

Arguably, the biggest inhibitor to building cloud-native applications has been the tools provided to developers. VMware is making a case for a centralized approach to managing the overall Kubernetes environment, one that enables IT teams to reduce the cognitive load developers would otherwise bear when building and deploying applications.

It’s not clear at what rate organizations are centralizing the management of Kubernetes environments, but with the rise of platform engineering as a methodology for managing DevOps workflows at scale, interest in doing so is growing. The challenge, as always, is determining which services to make available to which developers based on the nature of the applications being created.