The Missing Control Plane in Cloud-Native Supply Chains

Kubernetes has fundamentally changed how modern platforms deploy and operate software. With GitOps, declarative APIs, and a rich CNCF ecosystem, teams can scale infrastructure and applications with unprecedented consistency.

Yet as Kubernetes adoption matures, many organizations encounter a growing bottleneck outside the cluster, one that directly affects reliability, performance, scalability, cost efficiency, security, and developer velocity: artifact access.

Container images, Helm charts, OCI artifacts, and pipeline outputs form the backbone of cloud-native delivery. However, the systems governing how these artifacts are accessed, authorized, and promoted remain fragmented. As a result, teams struggle to scale Kubernetes platforms without accumulating policy sprawl, duplicated controls, and brittle delivery pipelines.

This article explores why Kubernetes needs an artifact access plane that also enforces governance, how virtual registries help close this gap, and how the model aligns with CNCF supply-chain initiatives.

From GitOps Simplicity to Artifact Complexity

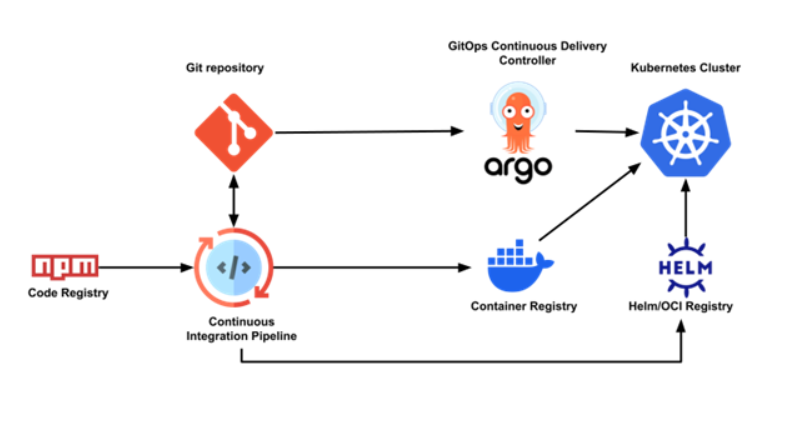

GitOps abstracts deployments into a clean model: desired state is stored in Git and continuously reconciled into clusters. But behind that abstraction lies a rapidly expanding artifact graph.

A typical Kubernetes deployment may depend on container images from multiple registries, Helm charts or OCI bundles, policy artifacts, operators and controllers, and sidecar binaries or runtime extensions.

Each artifact is retrieved from an external system, often governed by different access rules, trust models, and promotion processes.

Figure 1: Artifact Dependency Graph in Kubernetes Platforms

As platforms scale, a single deployment path fans out across multiple artifact sources, each enforcing its own access and policy logic.
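To make the fan-out concrete, the following minimal sketch groups the image references of one deployment by registry host. The image names are hypothetical, and the host-detection rule is a simplification of the convention Docker-style references follow (a first path component counts as a host only if it contains a dot or a colon, or is `localhost`); it is not a full reference parser.

```python
from collections import defaultdict

DEFAULT_REGISTRY = "docker.io"  # conventional default host for unqualified references


def registry_of(image_ref: str) -> str:
    """Return the registry host of an image reference (simplified rule)."""
    first, sep, _ = image_ref.partition("/")
    # The first path component is treated as a host only if it looks like one.
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first
    return DEFAULT_REGISTRY


def fan_out(image_refs):
    """Group image references by the registry that serves them."""
    groups = defaultdict(list)
    for ref in image_refs:
        groups[registry_of(ref)].append(ref)
    return dict(groups)


# Hypothetical references for a single deployment.
refs = [
    "nginx:1.27",
    "ghcr.io/example-org/api:2.3.1",
    "registry.internal.example:5000/platform/sidecar:0.9",
    "quay.io/example-org/operator:1.4",
]

groups = fan_out(refs)
for host, images in sorted(groups.items()):
    print(host, len(images))
```

Even in this small example, four images resolve to four separate registries, each of which may apply its own credentials, rate limits, and policies.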

This fragmentation becomes more pronounced in multi-cluster, multi-team, and regulated environments. The same artifact may be permitted in one context but forbidden in another, with enforcement scattered across CI/CD pipelines, registries, and admission controllers.

Why Repository-Centric Control Is Not Enough

Most cloud-native platforms rely on repositories and registries as the primary control point for artifact access. While necessary, this model has clear limitations.

Repository-level ACLs lack runtime and deployment context, security policies are often duplicated across CI/CD systems and scanners, and promotion rules frequently vary by environment. The result is a patchwork of controls enforced at different stages of delivery, often too late to prevent risk or wasted work.

Kubernetes itself avoided this problem by centralizing authentication and authorization through the API server. Artifact access, by contrast, has no equivalent control plane.

Introducing the Artifact Access Plane

An artifact access plane provides a consistent layer that mediates artifact requests across the platform. Rather than embedding access logic into every tool, it evaluates requests based on identity, context, and policy before artifacts are consumed.

This plane does not replace registries or Kubernetes-native controls. Instead, it complements them by governing how artifacts are accessed across CI/CD systems, GitOps controllers, security scanners, and deployment pipelines.
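A minimal sketch of such a decision, assuming a default-deny rule model, might look like the following. The `Request` and `Rule` types, their field names, and the example identities are all hypothetical and chosen for illustration; a real access plane would typically delegate this evaluation to a policy engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    identity: str     # who is asking, e.g. a pipeline or workload identity
    environment: str  # the context in which the artifact will be used
    artifact: str     # the artifact being requested


@dataclass(frozen=True)
class Rule:
    identity_prefix: str
    environment: str
    artifact_prefix: str


def is_allowed(request: Request, rules) -> bool:
    """Default deny: allow only if one rule matches identity, context, and artifact."""
    return any(
        request.identity.startswith(rule.identity_prefix)
        and request.environment == rule.environment
        and request.artifact.startswith(rule.artifact_prefix)
        for rule in rules
    )


# Hypothetical policy: team-a pipelines may pull base images in staging only.
rules = [Rule("ci/team-a/", "staging", "base-images/")]

staging = Request("ci/team-a/build-42", "staging", "base-images/python:3.12")
production = Request("ci/team-a/build-42", "production", "base-images/python:3.12")

staging_ok = is_allowed(staging, rules)
production_ok = is_allowed(production, rules)
```

The same identity requesting the same artifact gets a different answer depending on the environment, and the rule lives in one place instead of being re-implemented in each pipeline, scanner, or controller.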

Figure 2: Artifact Access Plane with Virtual Registry & Artifact Firewall Pattern

A common implementation of this model is the Virtual Registry. A Virtual Registry presents a logical, policy-aware view over one or more physical registries while remaining fully compatible with existing Kubernetes tooling. From the perspective of consumers, it behaves like a standard OCI endpoint. Behind the scenes, it centralizes and optimizes artifact flow across CI/CD systems, GitOps controllers, and clusters.

In large Kubernetes environments, artifact pulls are among the most frequent external operations. Every pod restart, autoscaling event, and pipeline execution generates registry traffic. When those pulls traverse regions or depend directly on external services, they introduce latency, egress costs, and additional failure domains.

By intelligently caching artifacts, localizing access, and absorbing deployment spikes, a Virtual Registry reduces upstream load, minimizes cross-region egress, and improves startup and build performance. It also introduces resilience by decoupling platform operations from upstream registry availability, providing more predictable behavior during outages or rate limits.

At the architectural level, this abstraction increases vendor independence, allowing platform teams to evolve or diversify registry backends without disrupting Kubernetes workloads. Rather than functioning solely as a policy checkpoint, the Virtual Registry becomes a foundational platform component that supports performance, scalability, resilience, and governance together.
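The caching and decoupling behavior can be illustrated with a small in-memory sketch. The `Upstream` and `VirtualRegistry` classes below are hypothetical stand-ins, not the API of any real registry product, and the sketch assumes artifacts are requested by immutable digest so that a cached entry never goes stale.

```python
class Upstream:
    """In-memory stand-in for a physical registry (hypothetical)."""

    def __init__(self, name, blobs):
        self.name = name
        self.blobs = dict(blobs)
        self.available = True
        self.requests = 0  # number of requests that reached this upstream

    def fetch(self, ref):
        self.requests += 1
        if not self.available:
            raise ConnectionError(f"{self.name} is unavailable")
        return self.blobs[ref]  # raises KeyError when the artifact is absent


class VirtualRegistry:
    """One logical endpoint over several upstreams, with a pull-through cache."""

    def __init__(self, upstreams):
        self.upstreams = list(upstreams)
        self.cache = {}

    def pull(self, ref):
        if ref in self.cache:
            return self.cache[ref]  # served locally, no upstream traffic
        for upstream in self.upstreams:
            try:
                blob = upstream.fetch(ref)
            except (KeyError, ConnectionError):
                continue  # try the next backend
            self.cache[ref] = blob
            return blob
        raise LookupError(f"{ref} not found in any upstream")


primary = Upstream("primary", {"app@sha256:aaa": b"layer-data"})
mirror = Upstream("mirror", {"chart@sha256:bbb": b"chart-data"})
registry = VirtualRegistry([primary, mirror])

registry.pull("app@sha256:aaa")  # first pull reaches the primary upstream
primary.available = False        # simulate an upstream outage
registry.pull("app@sha256:aaa")  # still succeeds, served from the cache
```

Consumers only ever address `registry`, so backends can be added, reordered, or replaced without changing the references that workloads use.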

Alignment with the CNCF Ecosystem

The CNCF ecosystem already provides many of the primitives required for this approach. OCI Distribution standardizes artifact transport, Sigstore enables signing and verification, Tekton Chains captures provenance, SPIFFE and SPIRE establish workload identity, and OPA or Gatekeeper express policy. What is missing is not capability, but orchestration.

These projects solve specific problems well, yet artifact access decisions are still made independently by each tool.

An artifact access plane composes these primitives into a shared control layer, reducing duplication while preserving openness and interoperability.
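As a rough illustration of that composition, the sketch below gathers the outcomes of the individual primitives into one `Evidence` record and evaluates them in a single place. It does not call Sigstore, Tekton Chains, SPIRE, or OPA; the boolean fields are assumed to have been produced by those tools beforehand, and every name here (including the `example.org` trust domain) is hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Evidence:
    signature_verified: bool  # assumed outcome of a signature verification step
    has_provenance: bool      # assumed presence of a provenance attestation
    workload_identity: str    # assumed SPIFFE ID of the requesting workload
    policy_allows: bool       # assumed result of a policy evaluation


def require_signature(e: Evidence) -> Optional[str]:
    return None if e.signature_verified else "signature not verified"


def require_provenance(e: Evidence) -> Optional[str]:
    return None if e.has_provenance else "provenance missing"


def require_trusted_identity(e: Evidence) -> Optional[str]:
    trusted = e.workload_identity.startswith("spiffe://example.org/")
    return None if trusted else "untrusted workload identity"


def require_policy(e: Evidence) -> Optional[str]:
    return None if e.policy_allows else "denied by policy"


CHECKS = [require_signature, require_provenance, require_trusted_identity, require_policy]


def decide(evidence: Evidence, checks=CHECKS) -> Tuple[bool, List[str]]:
    """Run every check once and return the decision with all failure reasons."""
    reasons = []
    for check in checks:
        reason = check(evidence)
        if reason is not None:
            reasons.append(reason)
    return (not reasons, reasons)


good = Evidence(True, True, "spiffe://example.org/ns/prod/sa/deployer", True)
bad = Evidence(True, False, "spiffe://other.test/ci", True)

good_decision = decide(good)
bad_decision = decide(bad)
```

Because every consumer calls the same `decide` function, a new requirement is added once to `CHECKS` instead of being duplicated across pipelines, scanners, and admission controllers.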

Why This Matters for Developer Velocity

Artifact access issues often surface as deployments that fail on late policy checks, environment-specific inconsistencies, slow feedback loops in CI/CD, and manual promotion workflows between environments.

By enforcing access and trust constraints earlier and consistently, platforms reduce rework and improve predictability. Developers interact with a curated artifact space where compliance is implicit, not discovered at deploy time.

The result is faster iteration without sacrificing governance.

Conclusion

Kubernetes transformed cloud-native platforms by centralizing control and standardizing interfaces. As software supply chains grow more complex, artifact access faces a similar inflection point. Just as Kubernetes standardized how workloads are orchestrated, the next evolution of cloud-native platforms is standardizing how artifacts are governed, delivered, and optimized at scale.

Treating artifact access as a first-class control plane brings performance, resilience, vendor flexibility, and trust into a coherent model. This layer will increasingly determine whether platforms scale predictably or fragment under their own complexity.