Security for Containers — or, Containers for Security

There has been a lot of discussion over the last couple of years about container security. Are containers secure? What are the vulnerabilities? What are the issues? Although these are valid questions, this article will try to answer a different one: Can we use containers for security? Our thesis is that transitioning application deployment to containers will increase security and decrease the attack surface compared to a non-container deployment. Indeed, containers are probably one of the best tools for application security, provided they are used properly.

First, we need to describe what containers provide:

Containers as Packaging Mechanism

Containers can package all the application artifacts and their dependencies in a reproducible and immutable format. The simplicity, and at the same time the power, of containers is that they create a single binary that encapsulates an application and all of its dependencies. We obviously would not need container packaging if applications were statically compiled with no dynamic libraries. But unfortunately, other than the few Go programmers out there, the majority of development environments are a dependency hell. By packaging all the dependencies in a single binary, containers allow developers to reproduce the deployment environment of an application consistently. At the same time, this binary becomes immutable. An upgrade of a library means a new binary.

It is difficult to justify any argument that a consistent packaging mechanism and binary immutability can actually jeopardize security. It is probably the best tool we have in our arsenal to guarantee consistency and manage security risks. And containerization of applications provides us this tool.

Containers as a Runtime Environment (Sandbox)

Container runtimes are responsible for using the underlying operating system capabilities to create an isolated sandbox where an immutable container image can run. The goal of the runtime is to orchestrate all the isolation and namespace capabilities of Linux systems (and, now, Windows servers) to isolate applications. By orchestrating cgroups, capabilities, SELinux or AppArmor profiles and seccomp filters, container runtimes take advantage of all the isolation properties of the Linux kernel to restrict what applications can and cannot do inside a system. The idea of sandboxing applications has always had its roots in improved security and is now making its way to browsers and desktop systems. See, for example, the Chromium or Firefox sandboxes.

As in the packaging case, a “sandboxed” application is always more secure than a non-sandboxed application. Let us take as an example a single nginx server. By default, an nginx deployment requires root access. A simple “apt-get install nginx” on an Ubuntu system will result in an nginx server running as root. And this is the component that is most vulnerable to attacks. When the same nginx server is run as a container, it does not run as root anywhere other than in the container itself. It does not have any access to the root filesystem, only to the container filesystem. It can actually be limited to a read-only filesystem for increased isolation, and it can be monitored based on its isolation. Without a doubt, an nginx server running as a container is more secure than an nginx server running as a plain Linux process.

Containers for Security

The simple message in this article is that if you have any application running in any environment and you go through the process of transforming it into a container, you will improve the application's security posture and reduce its attack surface. The benefits are on both the security and operational fronts, and in most cases they outweigh the costs. Let us dive into this through the whole application life cycle:

Dependency and Vulnerability Management

In a non-container environment, applications are built in a CI/CD pipeline and eventually deployed on some server or virtual machine. Even when automatic image baking techniques such as packer are used, the image includes a whole set of packages and libraries that might or might not be necessary for your application. “Hardening” of the base image is a black-list (and often black-art) process: Start with a base Linux distribution and start removing services and packages that are not needed. Often in this process there are dependencies between libraries, and some libraries remain because basic packages needed for system operations depend on them.

Enterprises often will try to standardize on a base image, starting with a distribution and going through an iterative process of adding and removing packages until every application's needs are satisfied. The idea of the golden AMI (Amazon Machine Image) in AWS deployments is not far from this reality, either. There are two solutions if there is a vulnerability in a software package: You can either rebuild all images that contain that package to eliminate the problem, or you can update those same images with a configuration management tool, like Chef or Puppet, and distribute the updated images to the infrastructure. Either way, the server drifts into a state where nobody knows exactly what it includes, and you can hardly secure something that you do not know about.

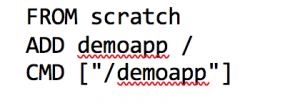

Let us contrast this with container packaging mechanisms. The container package mechanism enables you to move from a black-list model of manually disabling packages you don’t need to a white-list model of including only packages you actually do need. Here is a simple container image for a Go application:

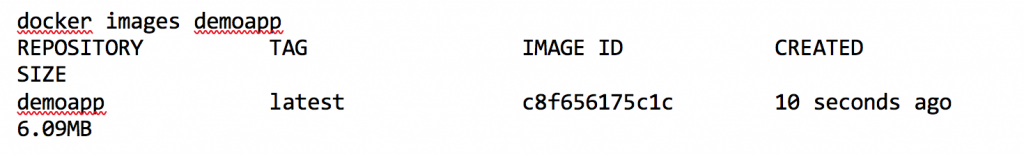

The basic operating system file system is “scratch.” This is a bare minimum file system that does not even have “sh” or “bash” installed. In this basic file system we just add the demoapp binary, and this is the only executable in this container. Even if there is a remote execution vulnerability in that binary, an attacker would not be able to find a shell to continue. If we list this image, we will see that it is actually only 6MB:

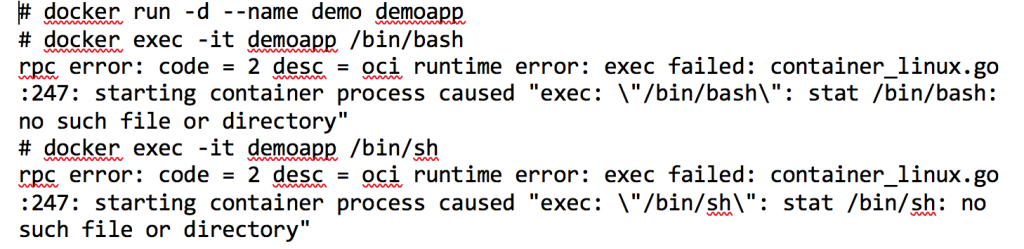
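For readers who cannot see the images, here is a minimal sketch of how such an image could be put together. It assumes a statically linked binary named demoapp already sits in the build directory (for a Go program, something like `CGO_ENABLED=0 go build -o demoapp .`). The helper name `write_scratch_dockerfile` is hypothetical, and this is not necessarily the exact file shown in the image above.

```shell
#!/bin/sh
# Sketch: write a Dockerfile that starts from the empty "scratch" base and
# adds nothing but the application binary.
# Assumption: the binary is called "demoapp" and is statically linked.
write_scratch_dockerfile() {
    target_dir="$1"
    cat > "$target_dir/Dockerfile" <<'EOF'
FROM scratch
COPY demoapp /demoapp
ENTRYPOINT ["/demoapp"]
EOF
}

# Typical use, run by hand where docker is available:
#   write_scratch_dockerfile .
#   docker build -t demoapp .
#   docker images demoapp
```

Because the image contains no shell, the exec form of ENTRYPOINT (the JSON array) is needed; the shell form would try to start /bin/sh, which does not exist in this image.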

If we try to access a running container with this image, even with the docker exec command, we will end up with nothing:

Shooting Yourself in the Foot

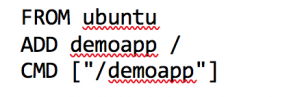

Just because containers offer the ability to properly manage image packaging does not mean everyone does it. Don’t blame the idea of containers for this, though. Let’s slightly modify this docker image:

In this modification, instead of starting to build the image from pretty much nothing, we started building the image from an ubuntu base image. This image is loaded with goodies including sh and bash and a whole bunch of utilities that might be needed in a test environment, but by no means are they needed to run the application. The result: You now have an image of 109 MB.

Crazy but real: 103 MB of unnecessary binary code that will never be used by your application but is transferred around between clouds, checked into repos and constantly monitored. And most likely it is only useful for attackers, who can use the utilities to take over your container. You want to avoid ShellShock? Don’t package “bash” with your application in the first place.

So, yes, you can use container packaging and shoot yourself in the foot. Or you can use it properly and take advantage of the immutability and white-listing capabilities.

Continuous Vulnerability Monitoring

Once we have immutable application images with only their necessary components, it becomes extremely simple to manage and monitor vulnerabilities. We now have a “bill of materials” of the applications and can easily map this to known vulnerabilities. The bill of materials can be extended to the source code of the application when the build process is automated in the CI/CD pipeline. We have a very good idea of what is running and potentially can stop developers from shooting themselves in the foot by blocking containers that start from generic images.
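As a rough illustration of blocking containers that start from generic images, a CI step could refuse any Dockerfile whose base image is not on an approved list. The sketch below is an assumption about how such a gate might look, not a feature of docker itself; the function name `check_base_images` and the `ALLOWED_BASES` variable are hypothetical.

```shell
#!/bin/sh
# Hypothetical CI gate: fail when a Dockerfile starts from a base image that
# is not on an allow-list. ALLOWED_BASES is a space-separated list and an
# assumption of this sketch; "scratch" is the only default entry.
ALLOWED_BASES="${ALLOWED_BASES:-scratch}"

check_base_images() {
    dockerfile="$1"
    # Print the image named on every FROM line, skipping flags such as
    # --platform=... that may precede it.
    awk 'toupper($1) == "FROM" {
             for (i = 2; i <= NF; i++)
                 if ($i !~ /^--/) { print $i; break }
         }' "$dockerfile" |
    while read -r base; do
        case " $ALLOWED_BASES " in
            *" $base "*) ;;   # approved base image
            *) echo "disallowed base image: $base" >&2
               exit 1 ;;      # ends the pipeline with a non-zero status
        esac
    done
}
```

A pipeline would call `check_base_images Dockerfile` before building and stop on a non-zero exit status. Note that this simple version treats every FROM line the same way, so a multi-stage build that refers to an earlier stage by name would need that stage name added to the list.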

Projects such as Grafeas promise to provide a consistent metadata store for the status of applications, and with proper integration they can become powerful security and compliance tools.

In other words, by embracing container packaging we have achieved two things:

- We have moved to a white-list model of dependency declaration.

- We have streamlined the operation of creating inventories of artifacts and their associated vulnerabilities.

Even if the container runtime did nothing for us, by embracing containers and moving existing applications to containers, we have solved a significant problem in the deployment pipeline.

Operating System Upgrades

The discipline that the white-list packaging mechanism enforces solves another significant problem: operating system updates. We all have seen organizations that are troubled by the fact that their applications are running on a particular version of the OS (CentOS 6.5, anyone?). Despite known vulnerabilities in either the kernel or one of the userland packages of the OS, IT organizations fail to follow standard upgrade practices because they are afraid “applications will fail.” And, in several cases, they will fail. These are failures of the dependency hell that we have gotten ourselves into. An application depends on a library, which depends on another library, which depends on something vulnerable. And since we cannot upgrade the OS for this application, we should not do it in our fleet of servers, because who can manage the vulnerabilities across all kinds of different versions? And then Spectre happens, and we have to backport changes to 5-year-old Linux kernels. This is the unfortunate reality of enterprise software.

Let us consider the alternative now, and let us assume that we can actually containerize this application. We can package all of its dependencies in a single container that does not really care about the user-space packages of the underlying operating system. By doing that, we can let different applications at different stages of maturity have different dependencies. More importantly, we now have an inventory of all applications and their dependencies, and we can streamline the life cycle of this inventory through a container registry. And we can now upgrade our operating system to the latest kernels and the latest distributions without affecting any of the applications. From a security and operational standpoint, this is the nirvana we have all been dreaming about.

Essentially, if you go through a “spring cleaning” process of transforming all your applications to containers, you will see extreme security and maintenance benefits even without taking advantage of the runtime capabilities or orchestration benefits or anything else from containers. It is a simple transition from a black-list security model to a white-list security model.

Sandboxing

The container runtime is essentially a sandboxing mechanism. Even if you do not trust the Linux kernel as an isolation mechanism and you prefer to rely on hardware virtualization for isolation between workloads, by using the container runtime to deploy your applications you can isolate the applications from the rest of the operating system and other services required in the infrastructure.

Undeniably there are operational benefits from containers, microservices and orchestration that are above and beyond the security benefits. But let’s ignore them for the time being and focus on the container runtime as a sandboxing mechanism. Let’s assume you will continue running a single application per VM, but in this case, instead of running the application directly on the server, you decide to use the container runtime. What security benefits do you get out of this decision, provided that you are deploying the container with the most secure posture? Here’s a list:

- File system access: Your application will be restricted to the container filesystem, and if it is stateless you can even choose to define this as a read-only filesystem. What this means is that if there is a vulnerability leading to remote execution, the attacker would just have access to the container filesystem and not your server filesystem. Even if the attacker manages a privilege escalation inside the container, the attacker will be confined to the container.

- Resource usage limits: Your application can be restricted to specific resource usage. A denial of service attack or a fork-bomb will be limited inside the container and will not affect the other services in the server.

- Snapshots: Assuming that you detect that your application is under attack, you can easily pause it and create a snapshot that can be used for forensics to actually figure out what happened.

- Least privilege capabilities: By default your application will only have a limited set of Linux capabilities, which you can restrict even further if you know what it does. Any attempt to perform an operation that requires a capability the container does not hold is denied by the kernel.

- Seccomp profiles for system calls: By restricting the system calls that your application can use, you further restrict the attack surface. Classic example: if your application does not need to execute a shell (execve), disable the system call. Most remote attacks will attempt to take over a shell, and they will be blocked immediately.

- No service accounts: Common practice in service deployments is to create a service account for every service running on a server and to manage its credentials and allocations. With a container instance, you don’t really need the service account. By leveraging user namespaces you can make the installation even more robust by essentially mandating that the sandbox always runs as an unprivileged user.

- Network isolation: Your application only exposes specific ports that must be explicitly defined, and simple mechanisms let you restrict traffic. A classic question is why your application would need to initiate outgoing traffic to the internet if it is a standard web server. Outgoing connections are the No. 1 method for connecting to remote control points and downloading attack payloads. They should be disabled by default.

All the above capabilities become available by default, and by properly using them you end up with a reduced attack surface. One can argue, rightly so, that all the above capabilities have existed in Linux systems for some time now, and that properly configuring a Linux system would have the same result. The power of containers, though, is that they normalize all these configurations in a declarative file definition (the container manifest), and automation is simple. Again, it is a matter of making systems secure by default and improving usability and discipline, rather than anything else.
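To make the list above concrete, here is a sketch of how several of these controls map to docker run flags. The flags themselves exist in the docker CLI, but every value (memory size, process limit, user ID, port) and the image name demoapp are illustrative assumptions, and the function name `hardened_run_command` is hypothetical. The function only prints the command so it can be reviewed before anything runs.

```shell
#!/bin/sh
# Sketch: assemble a "docker run" command that applies several of the
# sandboxing controls discussed above. It prints the command instead of
# executing it. All values are illustrative assumptions.
hardened_run_command() {
    image="${1:-demoapp}"
    set -- docker run --detach
    set -- "$@" --read-only                        # read-only container filesystem
    set -- "$@" --memory 256m --cpus 0.5           # resource usage limits
    set -- "$@" --pids-limit 64                    # caps process count (fork bombs)
    set -- "$@" --cap-drop ALL                     # least privilege: no capabilities
    set -- "$@" --security-opt no-new-privileges   # no privilege gain via setuid binaries
    set -- "$@" --user 10001:10001                 # unprivileged UID:GID, no host account needed
    set -- "$@" --publish 8080:8080                # expose one explicit port only
    set -- "$@" "$image"
    echo "$*"
}

hardened_run_command demoapp
```

Docker applies its default seccomp profile when none is specified; a custom profile can be supplied with `--security-opt seccomp=<profile.json>`. An application that needs scratch space under a read-only filesystem can be given one with `--tmpfs /tmp`.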

Shooting Yourself in the Foot

The above, however, does not mean you can’t shoot yourself in the foot. For example, the docker API allows you to instantiate a container with root privilege that has all the capabilities of an admin, connected to the host network, without any network protections and with full access to the root filesystem of the underlying machine. Try this (or better, don’t try it, unless you are curious):

docker run -it --privileged --net=host -v /:/rootfs centos

This is a container shipwreck. If there is anything that you should never do, it’s the above command.

Achieving the Best Security Posture of the Runtime

The folks at docker have done an excellent job of outlining the best practices in terms of security, and they provide a comprehensive tool, docker bench, that can help you identify insecure uses of the runtime. A simple script will quickly identify any issues in the deployment that result in insecure environments.

The FUD Factor

The FUD with container security is usually in the context. There are plenty of articles that describe “container attack vectors.” What they are really describing is shooting-yourself-in-the-foot scenarios. Let us illustrate some more of the FUD vectors with some examples:

Remote Launching a Container in Your Environment

- Attacker attacks a developer through the web browser.

- Web browser calls the docker API over a remote call to an IP address (that is unauthenticated).

- Attacker deploys a random container in one of your servers as privileged.

Correct deployment practices say that you should never enable the remote API of the docker daemon and, if you do, you should enforce some type of authorization. A simple tool such as the one above would have identified this risk immediately.
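In the same spirit, a single check of this kind can be sketched in a few lines. The example below flags a daemon.json that lists a tcp:// host without turning on TLS verification. It is a simplified assumption of how such a check might look, not how docker bench implements it, and the function name `daemon_api_exposed` is hypothetical. It also misses daemons exposed through a `-H tcp://...` command-line flag.

```shell
#!/bin/sh
# Sketch: return 0 (true) when the given daemon.json lists a tcp:// host
# but does not set "tlsverify": true. Uses plain grep rather than a JSON
# parser, so it is a heuristic only.
daemon_api_exposed() {
    cfg="$1"
    [ -r "$cfg" ] || return 1                      # no config file: nothing to flag
    grep -q '"tcp://' "$cfg" || return 1           # no TCP listener configured
    if grep -Eq '"tlsverify"[[:space:]]*:[[:space:]]*true' "$cfg"; then
        return 1                                   # TCP listener with TLS verification
    fi
    return 0
}

# /etc/docker/daemon.json is the usual location on Linux hosts.
config="${DOCKER_DAEMON_JSON:-/etc/docker/daemon.json}"
if daemon_api_exposed "$config"; then
    echo "WARNING: $config exposes the docker API over TCP without TLS verification"
fi
```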

Remote Execution Attack in a Container with “Root” Access

- Attacker uses the Struts vulnerability (because it’s fashionable) and can execute remote code on your machine.

- Attacker now has root access.

Well, not really. First of all, the attack is staged against a container with a bad image: a generic image with shell access, rather than a minimal image as described above. Second, the attacker only has root access in your container because you have not deployed the container with user namespaces enabled. A tool such as docker bench would have warned you ahead of time that the container is not using user namespaces.

If you have followed best practices:

- The attacker would not be able to download a payload (since outgoing traffic to the internet would have been prevented).

- Even if your network policies are loose, the attacker would only get access inside the container and as a regular user.

- The attacker would have very limited privileges to do anything with the kernel.

As a challenge, you can also try this as an example of a proper installation: an excellent experiment by Jess Frazelle on providing tight container security. You get shell access in a container running in the cloud. Can you break it and capture the flag? contained.af

Using Containers for Security

The above discussion hopefully makes it evident that a properly containerized application is far more secure than an application running by itself on any operating system. Both from a vulnerability and package management perspective and from a sandbox perspective, using a container for an application introduces discipline. And by introducing discipline you can avoid the mistakes that are often the root cause of attacks.

One could actually argue that the biggest security benefit of containers is to introduce and popularize a disciplined software development lifecycle process in software delivery.

Having said that, using containers for security does not solve all problems. The real problem that we are faced with is not the security of a single container, but rather the security of large-scale and constantly changing distributed systems. It is in this area that containers are becoming an even more valuable tool.