Best of 2022: Big Data on Kubernetes: The End For Hadoop?

As we close out 2022, we at Container Journal wanted to highlight the most popular articles of the year. Following is the latest in our series of the Best of 2022.

When data sets are too large and/or too complex for traditional software to deal with, we refer to them as ‘big data.’ Organizations and businesses around the world need to use big data to work on projects that influence the way we live now and in the future.

Conversational AI companies want their bots to seem as natural as possible. To achieve this, they must process massive amounts of data in the most efficient and cost-effective way possible. That’s where open source ecosystems like Hadoop come in.

About Hadoop

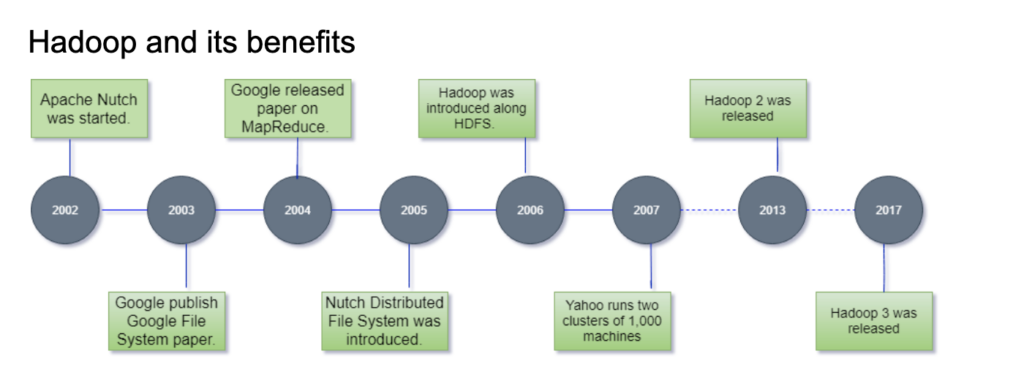

Hadoop is a Java-based framework for processing big data. This open source framework first emerged around 2005 as part of Apache Nutch, an open source web search engine project, before becoming an Apache project in its own right. It doesn’t require specialized hardware; Hadoop can run on widely available and inexpensive commodity hardware.

Hadoop offers efficient and fast data analytics at a relatively low cost. This is thanks to the way it distributes storage and processing power across a network of machines. Each node in that network can work on its own share of the data in parallel with all the other nodes. Hadoop can be installed on-premises or in cloud data centers.
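To make the idea of splitting work across nodes concrete, here is a minimal sketch of the map-and-reduce pattern that Hadoop popularized. It is plain Python, not Hadoop's API: the function names (`map_chunk`, `reduce_counts`, `word_count`) are made up for illustration, and threads on one machine stand in for the nodes of a cluster.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def map_chunk(lines):
    """Map step: count the words in one chunk of the input (one 'node')."""
    counts = Counter()
    for line in lines:
        counts.update(line.lower().split())
    return counts


def reduce_counts(partials):
    """Reduce step: merge the per-chunk counts into a single result."""
    total = Counter()
    for partial in partials:
        total.update(partial)
    return total


def word_count(lines, workers=3):
    """Split the input into chunks, map them in parallel, then reduce."""
    # Round-robin split: chunk i gets lines i, i + workers, i + 2 * workers, ...
    chunks = [lines[i::workers] for i in range(workers)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(map_chunk, chunks))
    return reduce_counts(partials)


if __name__ == "__main__":
    sample = [
        "big data on kubernetes",
        "big data on hadoop",
        "hadoop runs on commodity hardware",
    ]
    print(word_count(sample).most_common(3))
```

In a real cluster the chunks would be blocks of a file stored on different machines, and the map step would run where the data already lives. The sketch only shows the shape of the computation.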

The Benefits of Hadoop

Hadoop is a popular choice for organizations needing to use big data. Let’s take a look at some of the benefits that account for that.

- Low expenditure: Hadoop allows organizations to perform big data analytics without the need for expensive hardware and software.

- Accessibility: Lower cost allows more organizations to tap into the power of big data. Without something like Hadoop, big data analytics would be prohibitively expensive for many of them.

- Data security: Hadoop’s file system replicates each block of data across several nodes (three copies by default), so users can be confident their data is recoverable if a node fails.

- Processing and storage capacity: Big data processing requires a lot of storage and processing capacity. Hadoop’s distributed computing model allows for that.

Hadoop’s Limitations

Since Hadoop debuted in the mid-2000s, other projects and vendors have made further technological strides. That competition means Hadoop’s market share has dwindled somewhat. Let’s take a look at some of the reasons why this may be the case.

- Security: Hadoop doesn’t make security the highest of priorities. Security features are switched off by default, so users must configure them manually or use a third-party option. Unless that is done, data is unencrypted.

- Not well-suited for smaller data sets: Hadoop is great at dealing with big data. With smaller data sets or many small files, however, the overhead of distributing the work across a cluster tends to outweigh the benefit.

- Steep learning curve: Inexperienced users find Hadoop challenging to learn. Its native programming model is built around Java, which is not the language most data analysts reach for first.

- No real-time analytics: Hadoop’s processing model is batch-oriented, so there is no native support for real-time analytics. This is a deal-breaker for many organizations nowadays.

Big Data and Kubernetes

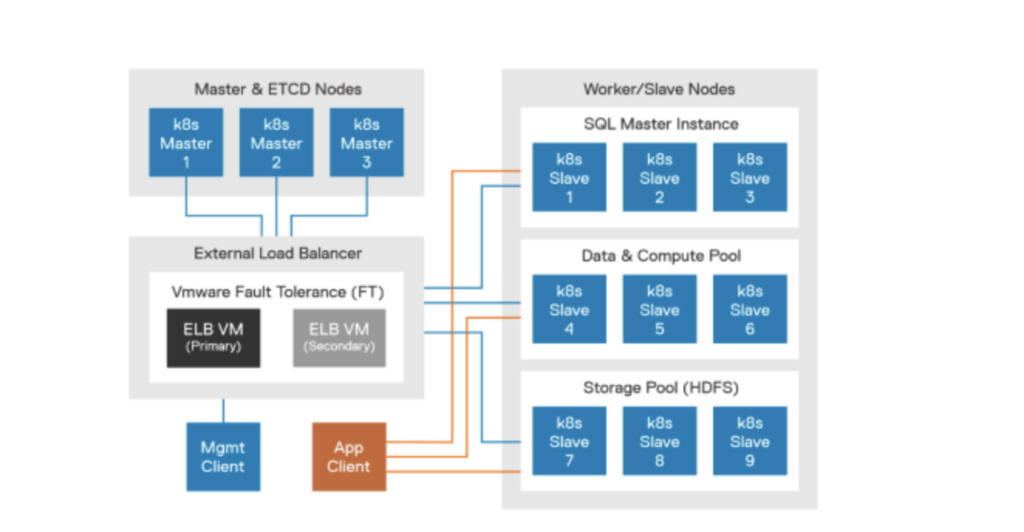

There are alternatives to Hadoop for processing big data. One of these is to run big data software on Kubernetes. Initially, Kubernetes was used primarily for stateless services. Now, it is growing in popularity among data analytics teams and for stateful workloads. Recent developments have allowed Kubernetes to become a useful tool in the field of big data. This is a boon for data scientists.

What is Kubernetes?

Kubernetes is an open source container orchestrator and a platform for developing cloud-native applications. It was developed and released by Google in 2014. Developers use it to automatically deploy, scale and manage containerized applications. Kubernetes is now maintained by the Cloud Native Computing Foundation (CNCF).
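As a rough illustration of what "deploy, scale and manage" looks like in practice, the sketch below builds a minimal Deployment manifest as a Python dictionary and prints it as JSON. The helper `make_deployment`, the application name and the image reference are all hypothetical; only the manifest field names (`apiVersion`, `kind`, `replicas`, `selector`, `template`) come from the Kubernetes Deployment resource.

```python
import json


def make_deployment(name, image, replicas=3):
    """Build a minimal apps/v1 Deployment manifest as a plain dict."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            # Desired number of identical pods; changing this scales the app.
            "replicas": replicas,
            # The selector must match the labels on the pod template below.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical application name and image, for illustration only.
    manifest = make_deployment("analytics-worker", "example.com/analytics-worker:1.0")
    print(json.dumps(manifest, indent=2))
```

The printed JSON could be saved to a file and handed to `kubectl apply -f`, which accepts JSON as well as YAML. Kubernetes then works to keep the declared number of replicas running.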

Why Use Kubernetes to Support Big Data Software?

Kubernetes offers operations and infrastructure teams a lot of reliability and flexibility. It helps to streamline the deployment and management of containerized applications. Let’s look at why Kubernetes is well-suited to supporting big data software.

Easier to build: Containers and Kubernetes make building big data software much easier. They make the process more reliable and, crucially, repeatable. It’s like using video templates for editing. DevOps teams save a great deal of time because they can easily reuse container images.

By running each version in its own containers, DevOps teams can use Kubernetes to safely test multiple versions of an application side by side, which makes deploying and updating a streamlined process.

Cost savings: Kubernetes can help organizations take full advantage of cloud technologies. This means that many basic tasks can be taken care of through automation or by the cloud provider.

Data analytics at this scale can be very demanding on infrastructure. Kubernetes allows workloads to share resources, which makes the process significantly more efficient. This is thanks to containerization, which lets multiple applications run side by side on the same host operating system. Each container carries its own dependencies and can be given its own share of CPU and memory, which avoids dependency conflicts and competition for resources. Combined with an easier build process, this enables a more cost-efficient approach to big data processing.
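The toy model below shows why declared resource needs let several workloads share a machine without starving each other. It is not the Kubernetes scheduler, which uses a far more elaborate filtering and scoring process. The `Node` and `Pod` classes, the `schedule` function and the first-fit rule are all simplifying assumptions made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    cpu_millicores: int  # CPU still free on this node
    memory_mib: int      # memory still free on this node
    pods: list = field(default_factory=list)

    def fits(self, pod):
        """True if the pod's requested CPU and memory are both still free."""
        return (pod.cpu_millicores <= self.cpu_millicores
                and pod.memory_mib <= self.memory_mib)

    def place(self, pod):
        """Reserve the pod's requested resources on this node."""
        self.cpu_millicores -= pod.cpu_millicores
        self.memory_mib -= pod.memory_mib
        self.pods.append(pod.name)


@dataclass
class Pod:
    name: str
    cpu_millicores: int  # requested CPU
    memory_mib: int      # requested memory


def schedule(pods, nodes):
    """First-fit placement; returns {pod name: node name, or None if unplaced}."""
    placement = {}
    for pod in pods:
        target = next((n for n in nodes if n.fits(pod)), None)
        if target is not None:
            target.place(pod)
        placement[pod.name] = target.name if target else None
    return placement


if __name__ == "__main__":
    nodes = [Node("node-1", 2000, 4096), Node("node-2", 2000, 4096)]
    pods = [Pod("etl", 1500, 1024), Pod("report", 1000, 1024), Pod("cache", 500, 1024)]
    print(schedule(pods, nodes))
```

Because every workload states what it needs up front, a node is never handed more than it can run, and spare capacity on one machine can be used by a small job instead of sitting idle.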

Portability: Using Kubernetes to manage containers allows DevOps teams to deploy applications anywhere. It eliminates the need for reconfiguring components to be compatible with varying software and hardware infrastructure. It enables the reproduction of the same stack across instance types, cloud regions or hardware generations.

Does Kubernetes Mark the End of Hadoop?

The answer is … maybe. Hadoop offers a narrower set of tools and can’t match the flexibility made available by Kubernetes. Hadoop’s native APIs are centered on Java, whereas Kubernetes runs any containerized application, whatever programming language it is written in.

Using containerized applications allows you to move to alternative cloud storage, something that is far harder with a traditional Hadoop cluster. Portability is vital. By using Kubernetes and the cloud, responsibility for basic maintenance falls to the cloud provider. This greatly improves cost-efficiency and stops team communication tools from being clogged up with complaints about repetitive maintenance tasks.

As a tool for efficient, cost-effective big data analytics, Hadoop is a great option. But as technology inevitably moves on, many organizations are finding that Kubernetes provides greater flexibility and efficiency. Containerization makes all the difference if you want repeatable processes. Like using email templates, it makes the most mundane tasks much easier.