AI-Driven Cloud Moderation in Kubernetes Clusters

This article builds directly on “Platform Team Metrics That Actually Matter: Beyond DORA,” which highlighted key performance indicators like deployment frequency and cost efficiency for platform teams. It extends those concepts by exploring AI tools that automate cloud resource moderation in Kubernetes, helping teams achieve those metrics at scale.

The Cost Challenge in Kubernetes Platforms

Kubernetes clusters enable dynamic scaling but often lead to unchecked cloud spend through orphaned resources, overprovisioned pods, and inefficient autoscaling. Platform engineers track metrics like mean time to recovery (MTTR) and change failure rate, yet cloud costs frequently exceed budgets by 30-50% in mature setups.

AI addresses this by analyzing usage patterns in real time, predicting waste, and enforcing policies without human intervention. Tools integrate with Kubernetes operators to right-size resources proactively.
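To make "right-sizing" concrete, here is a minimal sketch of the arithmetic behind a request recommendation. It assumes you already have per-pod CPU usage samples in millicores. The function name, the 95th-percentile choice, and the 15% headroom are illustrative assumptions, not the behavior of any particular tool.

```python
import math


def recommend_cpu_request(usage_millicores, percentile=95, headroom_pct=15):
    """Suggest a CPU request (millicores) from observed usage samples.

    Takes the nearest-rank percentile of the samples and adds a headroom
    margin. Both defaults are illustrative assumptions.
    """
    if not usage_millicores:
        raise ValueError("need at least one usage sample")
    ordered = sorted(usage_millicores)
    # Nearest-rank percentile: the smallest sample with at least
    # `percentile` percent of all samples at or below it.
    rank = max(1, math.ceil(percentile * len(ordered) / 100))
    baseline = ordered[rank - 1]
    return math.ceil(baseline * (100 + headroom_pct) / 100)


# Hypothetical samples for a pod that currently requests 1000m.
samples = [120, 135, 150, 140, 160, 155, 130, 145, 300, 150]
current_request = 1000
suggested = recommend_cpu_request(samples)
print(f"current={current_request}m suggested={suggested}m")
```

A learned model would replace the fixed percentile with a prediction, but the output is the same kind of value: a request closer to what the workload actually uses.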

Core AI Techniques for Moderation

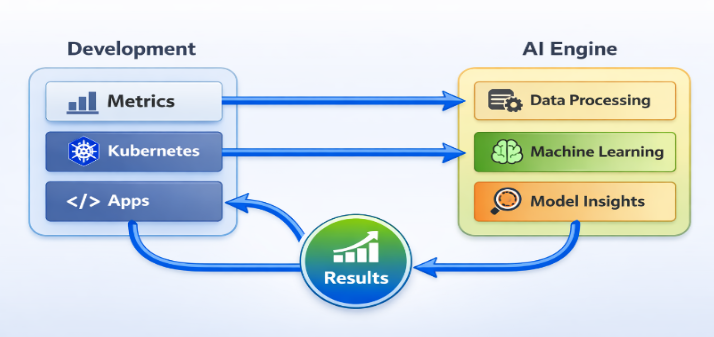

AI-driven moderation uses machine learning models trained on cluster telemetry from Prometheus or OpenTelemetry.

- Anomaly Detection: Models like isolation forests flag unusual spikes, such as a namespace consuming 200% of its expected CPU, and can trigger an automatic scale-down.

- Predictive Scaling: Time-series forecasting (e.g., Prophet or LSTM) anticipates load based on historical data, preventing overprovisioning during off-peak hours.

- Resource Optimization: Reinforcement learning agents simulate pod placements to minimize costs while meeting SLAs, similar to Kubernetes’ descheduler but enhanced with AI.
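As a rough illustration of the anomaly-detection idea, the sketch below flags upward CPU spikes in a namespace's usage history. It deliberately swaps the isolation forest for a much simpler stand-in, the modified z-score based on the median and median absolute deviation, so that it runs with the standard library alone. The function name, sample values, and the 3.5 threshold are assumptions for illustration.

```python
import statistics


def find_spikes(samples, threshold=3.5):
    """Return indices of samples that sit far above the typical level.

    Uses the modified z-score, 0.6745 * (x - median) / MAD, as a simple
    stand-in for a trained model such as an isolation forest.
    """
    median = statistics.median(samples)
    mad = statistics.median(abs(x - median) for x in samples)
    if mad == 0:
        return []  # No spread in the data, so nothing can be ranked as unusual.
    return [
        i for i, x in enumerate(samples)
        if 0.6745 * (x - median) / mad > threshold  # one-sided: spikes only
    ]


# Hypothetical CPU usage (millicores) for one namespace over eight intervals.
namespace_cpu = [2000, 2100, 1900, 2200, 2000, 6300, 2100, 1800]
spike_indices = find_spikes(namespace_cpu)
print("suspicious intervals:", spike_indices)
```

In a real controller the flagged intervals would feed a policy decision rather than a print statement, and a multivariate model would look at memory, network, and cost signals together.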

These run as custom controllers in the cluster, querying cloud APIs like AWS Cost Explorer or GCP Billing.
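The predictive-scaling idea can be sketched in the same spirit. The example below replaces Prophet or an LSTM with a plain seasonal average, which is an assumption made to keep the code dependency-free: it forecasts each upcoming time slot as the mean of the same slot in past seasons, then converts load into a replica count. All names and numbers are hypothetical.

```python
import math
from statistics import mean


def seasonal_average_forecast(history, season_length, horizon):
    """Forecast each future slot as the mean of that slot in past seasons."""
    if len(history) < season_length:
        raise ValueError("need at least one full season of history")
    forecasts = []
    for step in range(horizon):
        slot = (len(history) + step) % season_length
        # Every season_length-th value starting at `slot` shares that slot.
        forecasts.append(mean(history[slot::season_length]))
    return forecasts


def replicas_for(load_rps, rps_per_replica, min_replicas=1):
    """Translate a forecast load into a replica count."""
    return max(min_replicas, math.ceil(load_rps / rps_per_replica))


# Hypothetical requests per second for two days, four 6-hour blocks per day.
history = [100, 400, 900, 300, 120, 420, 880, 280]
forecast = seasonal_average_forecast(history, season_length=4, horizon=4)
plan = [replicas_for(load, rps_per_replica=200) for load in forecast]
print("forecast:", forecast)
print("replica plan:", plan)
```

The replica plan drops during the quiet blocks, which is the overprovisioning saving the forecast is meant to unlock.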

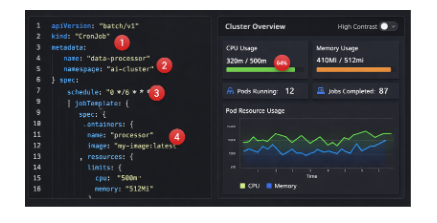

Practical Implementation Steps

Start by instrumenting your cluster for AI readiness.

- Deploy observability: Use kube-state-metrics and node-exporter to feed data into a time-series database such as Prometheus.

- Build AI pipelines: Leverage open-source frameworks such as Kubeflow for model training on cost data.

- Enforce via operators: Create a Custom Resource Definition (CRD) for “AIClusterBudget” that applies policies cluster-wide.

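For example, an AI agent could detect idle nodes and drain them whenever forecast spend threatens a budget declared like this:

    # Hypothetical custom resource; the API group, kind, and fields are illustrative.
    apiVersion: ai-moderation.example.com/v1
    kind: AIClusterBudget
    metadata:
      name: platform-budget
    spec:
      maxCost: "5000/month"
      aiModel: "cost-forecaster-v2"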

This ensures self-service compliance, aligning with platform goals of reducing toil.
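To show what an operator might do with such a budget, here is a minimal sketch of the decision step in a reconcile loop. It assumes the `maxCost` string uses the "amount/month" form from the manifest and that a forecast of monthly spend is already available. The class, function names, action labels, and the 80% warning ratio are all hypothetical. A real controller would read the object through the Kubernetes API instead of constructing it by hand.

```python
from dataclasses import dataclass


@dataclass
class BudgetSpec:
    """Mirrors the spec fields of the example manifest (hypothetical schema)."""
    max_cost: str  # e.g. "5000/month"
    ai_model: str  # e.g. "cost-forecaster-v2"


def parse_monthly_limit(max_cost):
    """Parse an 'amount/month' string into a numeric monthly limit."""
    amount, _, period = max_cost.partition("/")
    if period != "month":
        raise ValueError(f"unsupported budget period: {period!r}")
    return float(amount)


def decide_action(budget, forecast_monthly_cost, warn_ratio=0.8):
    """Pick an action by comparing forecast spend with the budget limit."""
    ratio = forecast_monthly_cost / parse_monthly_limit(budget.max_cost)
    if ratio > 1.0:
        return "scale_down"  # forecast exceeds the budget
    if ratio >= warn_ratio:
        return "warn"  # approaching the limit
    return "ok"


budget = BudgetSpec(max_cost="5000/month", ai_model="cost-forecaster-v2")
for forecast_cost in (3000.0, 4200.0, 5600.0):
    print(forecast_cost, "->", decide_action(budget, forecast_cost))
```

Keeping the decision a pure function of the spec and the forecast makes the policy easy to unit test before it is allowed to act on a live cluster.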

Real-World Impact on Platform Metrics

Teams using AI moderation report 25-40% cost reductions. One enterprise cut AWS bills by $200K quarterly by automating spot instance bidding in EKS. Beyond DORA, this boosts flow efficiency: developers focus on code, not on tickets for resource approvals.

Metrics improve: Deployment frequency rises as guardrails prevent cost-related rollbacks, and reliability grows via predictive alerts.

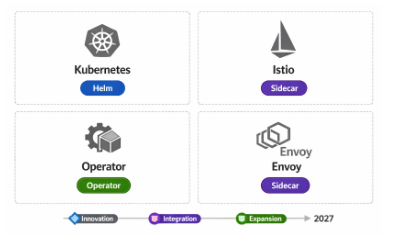

Vendor-Neutral Tools and Best Practices

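Opt for tools that integrate through standard Kubernetes interfaces to stay agnostic; note that StormForge and CAST AI are commercial products: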

| Tool | Function | Kubernetes Integration |
| --- | --- | --- |
| Kubecost | Baseline cost allocation | Helm chart, Prometheus exporter |
| StormForge | AI optimization | Operator for experiments |
| CAST AI | Auto-scaling | Native K8s controller |
| Kubecost + MLflow | Custom models | Sidecar injection |

Best practices include starting small (one namespace), iterating via developer feedback, and treating the AI layer as a platform product with clear docs.

Monitor for AI drift, and retrain models quarterly on fresh data to maintain accuracy.
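One simple way to watch for drift is to compare recent forecasts with what was actually billed. The sketch below uses mean absolute percentage error (MAPE) as the signal. The function names, the sample figures, and the 10% threshold are assumptions, and a production setup would likely track several metrics.

```python
def mean_absolute_percentage_error(actual, predicted):
    """Return MAPE in percent for paired actual and predicted values."""
    if not actual or len(actual) != len(predicted):
        raise ValueError("need two non-empty sequences of equal length")
    if any(a == 0 for a in actual):
        raise ValueError("MAPE is undefined when an actual value is zero")
    errors = [abs(a - p) / abs(a) for a, p in zip(actual, predicted)]
    return sum(errors) / len(errors) * 100


def needs_retraining(actual, predicted, max_mape=10.0):
    """Flag the model for retraining once forecast error passes the threshold."""
    return mean_absolute_percentage_error(actual, predicted) > max_mape


# Hypothetical daily costs (USD) versus two sets of forecasts.
actual_costs = [100, 200, 400]
stale_forecast = [110, 180, 300]
fresh_forecast = [102, 196, 404]
print("stale model drifted:", needs_retraining(actual_costs, stale_forecast))
print("fresh model drifted:", needs_retraining(actual_costs, fresh_forecast))
```

Running a check like this on a schedule turns the quarterly retraining cadence into a floor instead of the only trigger.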

Future Directions

As Kubernetes evolves with eBPF and Wasm, AI moderation will incorporate edge inference for sub-millisecond decisions. Platform teams should prioritize this to meet 2026 mandates for sustainable engineering.

This approach turns cost metrics from reactive dashboards into proactive platform features, empowering engineers across the organization.