Build Cost Awareness Into Your Kubernetes IDP

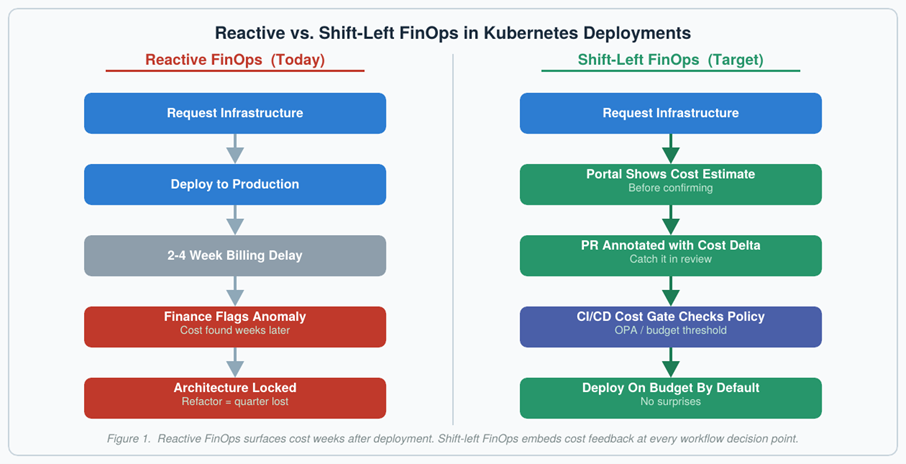

Your developers just shipped a Kubernetes service that will cost $47,000 a month to run. They found out three weeks after deployment when finance flagged the anomaly. By then, the architecture was locked, refactoring would delay the roadmap by a quarter and everyone was pointing fingers.

This scenario plays out constantly in organizations where FinOps and platform engineering operate as parallel disciplines. Kubernetes cost pressure has continued rising year over year across the industry. However, the real problem is not the cost itself — it is when teams discover it.

The Timing Problem

Traditional FinOps has a structural flaw. Cloud cost data often arrives hours or days late, depending on the provider and reporting pipeline. Cost anomaly alerts fire after architectural decisions have already shipped to production. Developers have moved on. The connection between a specific Kubernetes configuration change and its cost impact becomes archaeological work.

Platform engineering has solved identical timing problems before. Security shifted left. Compliance shifted left. Testing shifted left. In each case, the pattern was the same: Embed the feedback loop into the developer workflow rather than surfacing it downstream where changes are expensive.

Cost is the one discipline that has not made that transition yet.

Golden Paths Already Encode This Logic

When a developer follows your platform’s golden path to deploy a new Kubernetes service, they inherit security controls by default. They do not need to become experts in those controls to get a compliant deployment. That outcome is an emergent property of following the path.

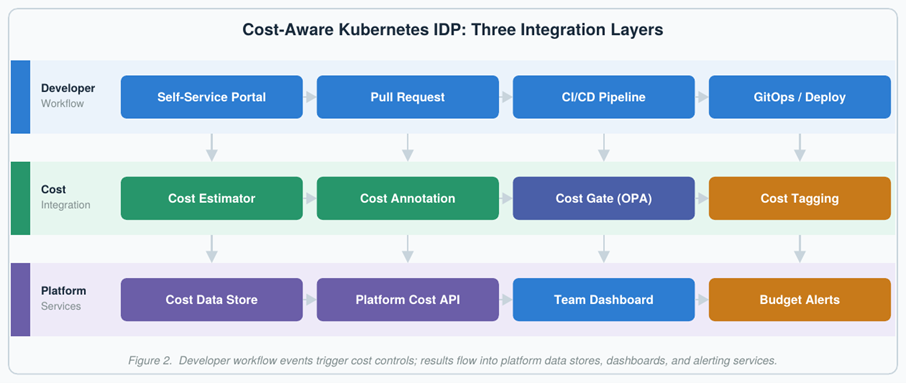

The same logic applies to financial efficiency. However, most internal developer platforms treat cost as an afterthought. A developer requesting infrastructure through a self-service portal sees CPU options, memory configurations and environment tiers. They almost never see what those choices will cost per month before confirming.

The gap is not technical. The integration points exist. The gap is organizational: FinOps teams own dashboards, platform teams own developer workflows and the two groups rarely design together.

Cost Visibility at the Point of Decision

The first integration point is the self-service portal. When a developer selects a node class or database tier, your platform should surface an estimated monthly cost before they confirm, as context rather than as a blocker. Tools such as Infracost and OpenCost provide the data layer; your IDP provides the interface. The key enhancement is pairing the estimate with comparables: A $2,400/month projection means little in isolation, but next to similar services that run at $800–$1,200/month, it is immediately actionable.
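As a rough illustration of the estimate-plus-comparables idea, here is a minimal Python sketch. The hourly rates, the `Request` fields and the function names are all hypothetical; a real portal would pull prices from a data layer such as OpenCost or Infracost rather than hard-coding them.

```python
from dataclasses import dataclass

# Hypothetical unit prices, for illustration only. A real portal would read
# these from its pricing data layer instead of hard-coding them.
CPU_RATE_PER_CORE_HOUR = 0.04
MEM_RATE_PER_GIB_HOUR = 0.005
HOURS_PER_MONTH = 730


@dataclass
class Request:
    """What the developer selected in the self-service form (assumed shape)."""
    cpu_cores: float
    memory_gib: float
    replicas: int


def estimate_monthly_cost(req: Request) -> float:
    """Estimate monthly cost from requested CPU and memory across all replicas."""
    hourly = req.replicas * (
        req.cpu_cores * CPU_RATE_PER_CORE_HOUR
        + req.memory_gib * MEM_RATE_PER_GIB_HOUR
    )
    return round(hourly * HOURS_PER_MONTH, 2)


def compare_to_peers(estimate: float, peer_low: float, peer_high: float) -> str:
    """Turn a bare number into context by placing it next to similar services."""
    peer_range = f"${peer_low:,.0f} to ${peer_high:,.0f}"
    if estimate > peer_high:
        return f"${estimate:,.0f}/month is above similar services ({peer_range}/month)"
    if estimate < peer_low:
        return f"${estimate:,.0f}/month is below similar services ({peer_range}/month)"
    return f"${estimate:,.0f}/month is in line with similar services ({peer_range}/month)"


if __name__ == "__main__":
    estimate = estimate_monthly_cost(Request(cpu_cores=4, memory_gib=16, replicas=6))
    print(compare_to_peers(estimate, peer_low=800, peer_high=1200))
```

The message is informational only. Nothing in this sketch blocks the request, which matches the goal of giving context at the point of decision.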

Cost Annotations in Pull Requests

Infrastructure-as-code changes live in version control. When a developer modifies a Terraform module, Helm values file or Crossplane composition, your CI pipeline can compute the cost delta and post it directly to the pull request.

Tools such as Infracost’s GitHub Actions integration handle this automatically — comparing the current branch against main and posting a cost breakdown per resource. A configuration change that doubles compute costs becomes visible before it merges, not three weeks after it bills.
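To make the mechanics concrete, the sketch below shows the kind of comparison such a pipeline step performs: take per-resource monthly estimates for the base branch and the pull request branch, keep only what changed and render a comment. This is not Infracost's API. The input dictionaries, function names and comment layout are assumptions for illustration.

```python
def cost_delta(base: dict[str, float], head: dict[str, float]) -> list[tuple[str, float, float]]:
    """Return (resource, before, after) for every resource whose monthly cost changed.

    `base` and `head` map a resource name to its estimated monthly cost in USD.
    A resource missing from one side is treated as costing 0 on that side.
    """
    rows = []
    for name in sorted(set(base) | set(head)):
        before = base.get(name, 0.0)
        after = head.get(name, 0.0)
        if before != after:
            rows.append((name, before, after))
    return rows


def render_comment(rows: list[tuple[str, float, float]]) -> str:
    """Format the changed resources as a Markdown table suitable for a PR comment."""
    lines = ["| Resource | Before | After | Delta |", "|---|---|---|---|"]
    total = 0.0
    for name, before, after in rows:
        delta = after - before
        total += delta
        lines.append(f"| {name} | ${before:,.2f} | ${after:,.2f} | {delta:+,.2f} |")
    lines.append(f"Monthly change: {total:+,.2f} USD")
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical estimates for the main branch and the PR branch.
    main_branch = {"deployment/api": 1200.0, "statefulset/db": 640.0}
    pr_branch = {"deployment/api": 2400.0, "statefulset/db": 640.0, "cronjob/report": 35.0}
    print(render_comment(cost_delta(main_branch, pr_branch)))
```

Unchanged resources are left out of the table so reviewers see only what the pull request actually moves.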

Cost Gates: From Visibility to Governance

Visibility alone does not prevent budget overruns. Some decisions warrant guardrails — policy checks that run during deployment and pause changes that exceed defined thresholds until the right approver weighs in.

Open Policy Agent (OPA) works well here. A Rego policy can block Kubernetes deployments projecting over a team’s monthly budget without FinOps approval, or flag any new recurring cost above a set threshold for team lead review. Calibration matters: Gates set too low create friction engineers route around; gates set too high catch nothing. In practice, teams that embed cost guardrails earlier in delivery catch more outliers before they become budget surprises.
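In an OPA setup these rules would be written in Rego. The decision logic itself is small, though, and the Python sketch below illustrates the two checks described above. The field names, the `Decision` values and the $500 review threshold are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_TEAM_LEAD_REVIEW = "needs_team_lead_review"
    NEEDS_FINOPS_APPROVAL = "needs_finops_approval"


@dataclass
class Change:
    """Cost facts about a proposed deployment (assumed input shape)."""
    team_current_monthly: float   # what the team already spends per month
    team_monthly_budget: float    # the team's agreed monthly budget
    new_recurring_monthly: float  # recurring cost this change adds
    finops_approved: bool = False


# Hypothetical threshold; calibrate it so the gate catches outliers
# without firing on routine changes.
TEAM_LEAD_REVIEW_THRESHOLD = 500.0


def evaluate(change: Change) -> Decision:
    """Apply the budget gate first, then the recurring-cost review threshold."""
    projected = change.team_current_monthly + change.new_recurring_monthly
    if projected > change.team_monthly_budget and not change.finops_approved:
        return Decision.NEEDS_FINOPS_APPROVAL
    if change.new_recurring_monthly > TEAM_LEAD_REVIEW_THRESHOLD:
        return Decision.NEEDS_TEAM_LEAD_REVIEW
    return Decision.ALLOW


if __name__ == "__main__":
    change = Change(team_current_monthly=9200, team_monthly_budget=10000,
                    new_recurring_monthly=1500)
    print(evaluate(change).value)
```

The gate returns a decision that pauses the change for an approver instead of rejecting it outright, so an approved overrun can still proceed.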

More sophisticated gates incorporate unit economics. A service costing $50,000 per month might be entirely justified at 100 million requests; the same spend at 100,000 requests is a problem. Connecting your cost gate to a Prometheus metric shifts the question from how much to how efficiently.
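Working through the numbers above: $50,000 across 100 million requests is $0.50 per 1,000 requests, while the same $50,000 across 100,000 requests is $500 per 1,000 requests. A minimal sketch of an efficiency check follows. The function names and the target value are hypothetical, and the request count is assumed to come from a metric you already collect, for example a request counter in Prometheus.

```python
def cost_per_thousand_requests(monthly_cost: float, monthly_requests: int) -> float:
    """Unit cost in USD per 1,000 requests served."""
    if monthly_requests <= 0:
        raise ValueError("monthly_requests must be positive")
    return monthly_cost * 1000 / monthly_requests


def within_efficiency_target(monthly_cost: float, monthly_requests: int,
                             max_cost_per_thousand: float) -> bool:
    """True when the service meets the unit-cost target, whatever its absolute spend."""
    return cost_per_thousand_requests(monthly_cost, monthly_requests) <= max_cost_per_thousand


if __name__ == "__main__":
    target = 1.00  # hypothetical target: at most $1.00 per 1,000 requests
    print(within_efficiency_target(50_000, 100_000_000, target))  # high-traffic service
    print(within_efficiency_target(50_000, 100_000, target))      # low-traffic service
```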

AI Workloads Demand a Different Cost Model

GPU instances can cost 10–50x their CPU equivalents, and LLM inference scales with token consumption rather than compute time. Golden paths for AI workloads should encode this: Time-sliced GPU access by default, dedicated allocation by exception and token budget governance built into the IDP rather than tracked in a spreadsheet.
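One way to picture token budget governance living in the platform rather than in a spreadsheet is a per-team budget object that the inference gateway consults before each call. The sketch below is only that, a picture. The `TokenBudget` class and its fields are hypothetical, and a production version would need shared, persistent state.

```python
from dataclasses import dataclass


@dataclass
class TokenBudget:
    """In-memory monthly token budget for one team (illustrative only)."""
    monthly_limit: int
    used: int = 0

    @property
    def remaining(self) -> int:
        return self.monthly_limit - self.used

    def try_consume(self, tokens: int) -> bool:
        """Record usage if it fits in the budget; otherwise refuse and leave usage unchanged."""
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        if self.used + tokens > self.monthly_limit:
            return False
        self.used += tokens
        return True


if __name__ == "__main__":
    budget = TokenBudget(monthly_limit=1_000_000)
    print(budget.try_consume(750_000), budget.remaining)
    print(budget.try_consume(400_000), budget.remaining)
```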

Measuring Whether It Worked

Track two sets of signals. On the leading side: What percentage of deployments pass through cost-annotated PR review, and how often cost gates activate. A 2–5% gate activation rate typically signals policies are catching real outliers without blocking routine work. On the lagging side: Cost estimate accuracy against actual spend, and unit cost per request over time.
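Two of these signals reduce to simple ratios. The sketch below computes gate activation rate and estimate error. The function names and sample figures are hypothetical, and the 2–5% band is the rule of thumb from the paragraph above, not a standard.

```python
def gate_activation_rate(deployments: int, gate_activations: int) -> float:
    """Percentage of deployments in which a cost gate fired."""
    if deployments <= 0:
        raise ValueError("deployments must be positive")
    return 100 * gate_activations / deployments


def estimate_error_pct(estimated_monthly: float, actual_monthly: float) -> float:
    """Absolute error of the pre-deployment estimate, as a percentage of actual spend."""
    if actual_monthly <= 0:
        raise ValueError("actual_monthly must be positive")
    return 100 * abs(estimated_monthly - actual_monthly) / actual_monthly


def in_target_band(rate_pct: float, low: float = 2.0, high: float = 5.0) -> bool:
    """True when the activation rate falls inside the 2-5% rule-of-thumb band."""
    return low <= rate_pct <= high


if __name__ == "__main__":
    rate = gate_activation_rate(deployments=400, gate_activations=12)
    print(f"gate activation rate: {rate:.1f}% (in band: {in_target_band(rate)})")
    print(f"estimate error: {estimate_error_pct(1000, 1250):.1f}%")
```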

The Shift That Remains

FinOps and platform engineering arrived at the same Kubernetes infrastructure simultaneously and are only now realizing they need to design together. The shift-left playbook that transformed security, testing and compliance applies directly to cloud cost — the feedback loops just need to move earlier in the workflow.

The integration points already exist and the tooling is mature. What most organizations are missing is the deliberate wiring of cost data into the developer experience at the moments that matter: Portal provisioning, pull request review and deployment gates. Wire those three together and FinOps stops being a monthly reconciliation exercise — it becomes a continuous outcome of how your teams build software.